The meeting usually starts the same way.

A few smart people gather around a whiteboard, a conference table, or an Excel spreadsheet with a title like AI Demand. Someone has a soda. Someone has coffee. Someone has a marker. Someone says, “We need to figure out how to start building AI solutions.”

And then, almost like muscle memory, the first idea appears:

Chatbot.

A chatbot for employees to ask questions about their work tickets.

A chatbot for equipment documentation.

A chatbot for policies, PDFs, invoices, contracts, benefits, inventory, compliance rules, church potluck sign-ups, or whatever sacred or secular pile of chaos happens to be sitting in the corner.

It is not a bad instinct.

Chatbots are useful. They give people a place to ask. They lower friction. They make buried information feel less buried.

But a chatbot is not an agent.

That distinction matters.

A chatbot knows.

An agent does.

A chatbot explains the process. An agent moves through the process.

A chatbot tells you what the next step is. An agent can help take the next step, ask for permission when it reaches a boundary, leave a record behind, and come back with something closer to done.

That is the mindset shift many teams are still struggling to make. They are still living in “Copilot chat mode,” where AI is primarily an answer box attached to documents. But agents are not just better answer boxes. At their best, they can carry more of a workflow from beginning to end.

Once that becomes true, the whiteboard question changes.

It is no longer:

“What information is the user missing?”

It becomes:

“Where is work stuck?”

Followed quickly by:

“Do we have a documented process for unsticking it?”

And then:

“How would we know, quantitatively or operationally, whether the right thing was done?”

Those are very different questions.

They are also where the money is moving.

The Money Is Moving Toward Workflows

The clearest signal is finance.

OpenAI has already made its finance push visible. Its collaboration with PwC is explicitly focused on the office of the CFO, including workflows for planning, forecasting, reporting, procurement, payments, treasury, tax, and the accounting close. OpenAI also says its own finance organization is acting as “customer zero” for agentic finance workflows, using that environment to validate governance models, runtime controls, and human-agent collaboration patterns.

OpenAI has also introduced ChatGPT for Excel and financial data integrations aimed at modeling, analysis, research, diligence, underwriting, and related workflows. The important part is not simply that AI is entering spreadsheets. The important part is that AI is being placed inside the tools, data sources, formulas, review patterns, and approval loops that finance teams already use.

Even OpenAI’s personal finance experience points in the same direction. Users can connect financial accounts, ask questions grounded in their own financial context, and view balances, transactions, investments, and liabilities. OpenAI says the system cannot see full account numbers or make changes to accounts, which is exactly the kind of boundary that matters when AI moves from answering to acting.

And this is not just OpenAI.

Anthropic has released ready-to-run financial services agent templates for work such as pitchbooks, KYC screening, earnings review, model building, valuation review, general ledger reconciliation, month-end close, and statement audit. These are not generic chatbots with finance branding. Anthropic describes them as agent templates packaged with skills, connectors, subagents, and approval-flow adaptability.

Anthropic also announced a new enterprise AI services company with Blackstone, Hellman & Friedman, and Goldman Sachs to bring Claude into core business operations at mid-sized companies. The interesting part of that announcement is not just the names involved. It is the delivery model: engineers working close to the customer, studying operations, and building systems around the workflows people actually use.

The market is not merely asking, “Can AI answer questions?”

It is asking, “Can AI help finish work?”

Why Finance Is An Obvious Early Market

Finance is attractive for agentic AI because the work is unusually agent-shaped.

- It has structured artifacts.

- It has recurring deadlines.

- It has spreadsheets.

- It has source systems.

- It has approvals.

- It has controls.

- It has exceptions.

- It has measurable outcomes.

- It has people spending long afternoons reconciling numbers, refreshing models, checking variances, drafting memos, preparing reports, and trying to determine whether something is wrong, late, misstated, missing, or merely weird.

That is exactly the kind of environment where agents become interesting.

Not because finance work is simple. It is not.

And not because finance professionals are replaceable. They are not.

Finance is attractive because much of the work involves bounded judgment: judgment exercised inside rules, policies, reconciliations, deadlines, source documents, and review paths.

Agents are most useful where the work is not purely creative, not purely mechanical, and not purely conversational. They are useful where there is a repeatable process, enough data to evaluate the result, and a human who still owns the final judgment.

Software development taught a similar lesson.

Over the last couple of years, coding assistants and agents moved quickly from novelty to serious participation in real engineering workflows. That does not mean software developers are obsolete. It means the generic coding-assistant market became crowded very quickly.

Once a category becomes obvious, the competition changes.

It is no longer enough to say, “We built an AI that can help with code.”

The questions become harder:

Who has the best model?

Who has distribution?

Who is already inside the developer workflow?

Who has enterprise trust?

Who can integrate with the systems of record?

Who can survive the platform squeeze?

The same thing is now happening in finance.

There is gold there.

But that is exactly why a smaller builder should be cautious about running straight at it.

When OpenAI, Anthropic, PwC, Blackstone, Goldman Sachs, and the rest of the financial-industrial constellation are already putting models, engineers, capital, workflows, data access, and distribution behind finance agents, the question is not:

“Is finance a big market?”

Of course it is.

The better question is:

“What can I build that they cannot easily absorb, copy, bundle, or out-distribute?”

That is a colder question.

But it is a better one.

Generic Wrappers Are Being Squeezed

Generic AI wrappers are being squeezed from every direction. Frontier labs are moving down the stack. Consultancies are moving up the stack. Systems of record are adding agent interfaces. Private equity is becoming a distribution channel. Enterprise software vendors are embedding AI into products customers already own.

That squeeze should change how we brainstorm AI ideas.

Most teams still ask broad, cloudy questions:

“Can AI help with field workers?”

“Can AI help with support?”

“Can AI help with finance?”

“Can AI help with operations?”

Those questions are too big. They produce demos. They do not reliably produce durable systems. The better question is smaller and more stubborn:

“What specific business object does this agent understand?”

A business object like:

- A support ticket

- A claim

- A permit

- A purchase order

- A care plan

- A field service visit

- A renewal

- A compliance exception

- A student intervention plan

- A donor follow-up

- A construction change order

Generic intelligence becomes valuable when it attaches itself to the real nouns and verbs of a business.

What object is being created, reviewed, routed, updated, approved, rejected, reconciled, escalated, or closed?

How close does the agent sit to that object?

Does it know the data model?

Does it understand the rules?

Can it see the relevant context?

Can it take a bounded action?

Can a human review the final move?

Can we measure whether the outcome improved?

That is where agents become useful. They understand the nouns of the business and act carefully on the verbs.
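To make “nouns and verbs” a little more concrete, here is a minimal Python sketch that assumes a hypothetical purchase-order object with a made-up set of states. The class, the state names, and the transition table are illustrative only and do not reflect any vendor's data model. The idea is that the agent can only perform verbs that the object's current state allows, and every move leaves a record behind. In a real deployment, some verbs (such as `approve`) would likely stay with a human.

```python
from dataclasses import dataclass, field
from enum import Enum


class POStatus(Enum):
    # Hypothetical lifecycle states for a purchase order (the "noun").
    DRAFT = "draft"
    SUBMITTED = "submitted"
    APPROVED = "approved"
    REJECTED = "rejected"
    CLOSED = "closed"


# The "verbs": each (verb, current state) pair maps to the resulting state.
# Any verb/state pair not listed here is something the agent cannot do.
ALLOWED_TRANSITIONS = {
    ("submit", POStatus.DRAFT): POStatus.SUBMITTED,
    ("approve", POStatus.SUBMITTED): POStatus.APPROVED,
    ("reject", POStatus.SUBMITTED): POStatus.REJECTED,
    ("close", POStatus.APPROVED): POStatus.CLOSED,
}


@dataclass
class PurchaseOrder:
    po_number: str
    vendor: str
    amount: float
    status: POStatus = POStatus.DRAFT
    # Record of (old state, verb, new state) so every move can be reviewed later.
    history: list = field(default_factory=list)

    def apply(self, verb: str) -> None:
        """Apply a verb only if the current state allows it; otherwise refuse."""
        key = (verb, self.status)
        if key not in ALLOWED_TRANSITIONS:
            raise ValueError(f"cannot {verb!r} a purchase order in state {self.status.value!r}")
        new_status = ALLOWED_TRANSITIONS[key]
        self.history.append((self.status, verb, new_status))
        self.status = new_status


if __name__ == "__main__":
    po = PurchaseOrder("PO-1001", "Acme Supply", 1250.00)
    po.apply("submit")
    po.apply("approve")
    print(po.status.value)  # approved
    try:
        po.apply("submit")  # already approved, so this verb is refused
    except ValueError as err:
        print(err)
```

Keeping every allowed verb in one table makes the agent's authority easy to read, review, and change. The useful part is not the chat window in front of the agent. It is how tightly the agent is bound to a real object and its rules.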

That is why the opportunity is not:

“Build an AI chatbot for X.”

The opportunity is:

“Find the unfinished work.”

Find The Unfinished Work

Start with work where three things are already present.

- There is a business object that has a data model.

- There is a process.

- There is a way to tell whether the result was good.

That does not mean the process is perfect. It rarely is. But there needs to be enough structure for an agent to participate without becoming a liability.

Then look for the work that lives between systems.

The work people delay because it is tedious, fragmented, politically annoying, or just boring enough that no one wants to admit how much time it consumes.

The work where a person still needs to approve the final move, but should not have to babysit every intermediate step.

The work where the AI can help with repeatable analysis, preparation, routing, checking, drafting, reconciliation, summarization, or exception detection while a human retains responsibility for judgment.

That last part matters.

A chatbot can be 80 percent helpful and still feel magical. An agent that acts correctly only 80 percent of the time can be dangerous.

Once the system writes, files, spends, routes, escalates, deletes, schedules, updates, commits, or approves, the game changes.

That is why the implementation layer matters.

The Implementation Layer Is The Product

The implementation layer includes process design, data access, delegated authority, evaluations, audit trails, recovery paths, and ongoing ownership.

Those are not boring details.

They are the difference between a demo and a system that succeeds in production.

Workflow design means deciding which decisions the model can make, which steps stay human, where handoffs happen, and what counts as done.

It also means answering harder questions:

Which system is authoritative?

What is the agent allowed to read?

What is it allowed to change?

When must it ask for permission?

How are outputs evaluated?

What gets logged?

What happens when the agent is wrong?

Who owns recovery?

Who owns the agent after launch?
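Several of those questions (what the agent may read, what it may change, when it must ask, what gets logged) can be pinned down in code instead of being left in a slide deck. The sketch below is a minimal Python illustration under stated assumptions: the policy fields, the `approver` callback standing in for a human reviewer, and the resource names are all hypothetical. It does not answer who owns recovery or who owns the agent after launch. Those remain organizational decisions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class AgentPolicy:
    # Hypothetical policy: what the agent may read, what it may change,
    # and which changes need a human to say yes first.
    readable: set
    writable: set
    needs_approval: set


@dataclass
class AuditEntry:
    timestamp: str
    action: str
    target: str
    outcome: str


@dataclass
class GatedAgent:
    policy: AgentPolicy
    # Stand-in for a human reviewer: returns True to allow, False to decline.
    approver: Callable[[str, str], bool]
    log: list = field(default_factory=list)

    def _record(self, action: str, target: str, outcome: str) -> None:
        # Every attempt is logged, including refusals, so there is a trail to review.
        stamp = datetime.now(timezone.utc).isoformat()
        self.log.append(AuditEntry(stamp, action, target, outcome))

    def read(self, target: str) -> bool:
        allowed = target in self.policy.readable
        self._record("read", target, "allowed" if allowed else "denied")
        return allowed

    def write(self, target: str) -> bool:
        # Boundary 1: is this change inside the agent's delegated authority at all?
        if target not in self.policy.writable:
            self._record("write", target, "denied: outside policy")
            return False
        # Boundary 2: does this change require a human to approve it first?
        if target in self.policy.needs_approval and not self.approver("write", target):
            self._record("write", target, "denied: human declined")
            return False
        # A real system would perform the change here; this sketch only records it.
        self._record("write", target, "done")
        return True


if __name__ == "__main__":
    policy = AgentPolicy(
        readable={"invoices", "vendor_master"},
        writable={"reconciliation_notes", "payment_batch"},
        needs_approval={"payment_batch"},
    )
    # Simulate a reviewer who declines everything sent to them.
    agent = GatedAgent(policy, approver=lambda action, target: False)
    agent.write("reconciliation_notes")
    agent.write("payment_batch")
    agent.write("vendor_master")
    for entry in agent.log:
        print(entry.action, entry.target, entry.outcome)
```

Note that none of this depends on which model sits behind the agent. The boundaries, the approval hook, and the log are workflow decisions.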

This is where many AI brainstorming sessions go sideways:

- They start with the model.

- Then they talk about document chunking.

- Then they talk about the interface.

- Then they talk about use cases.

They should start with the mess.

Start With The Mess

Ask where the handoff is breaking.

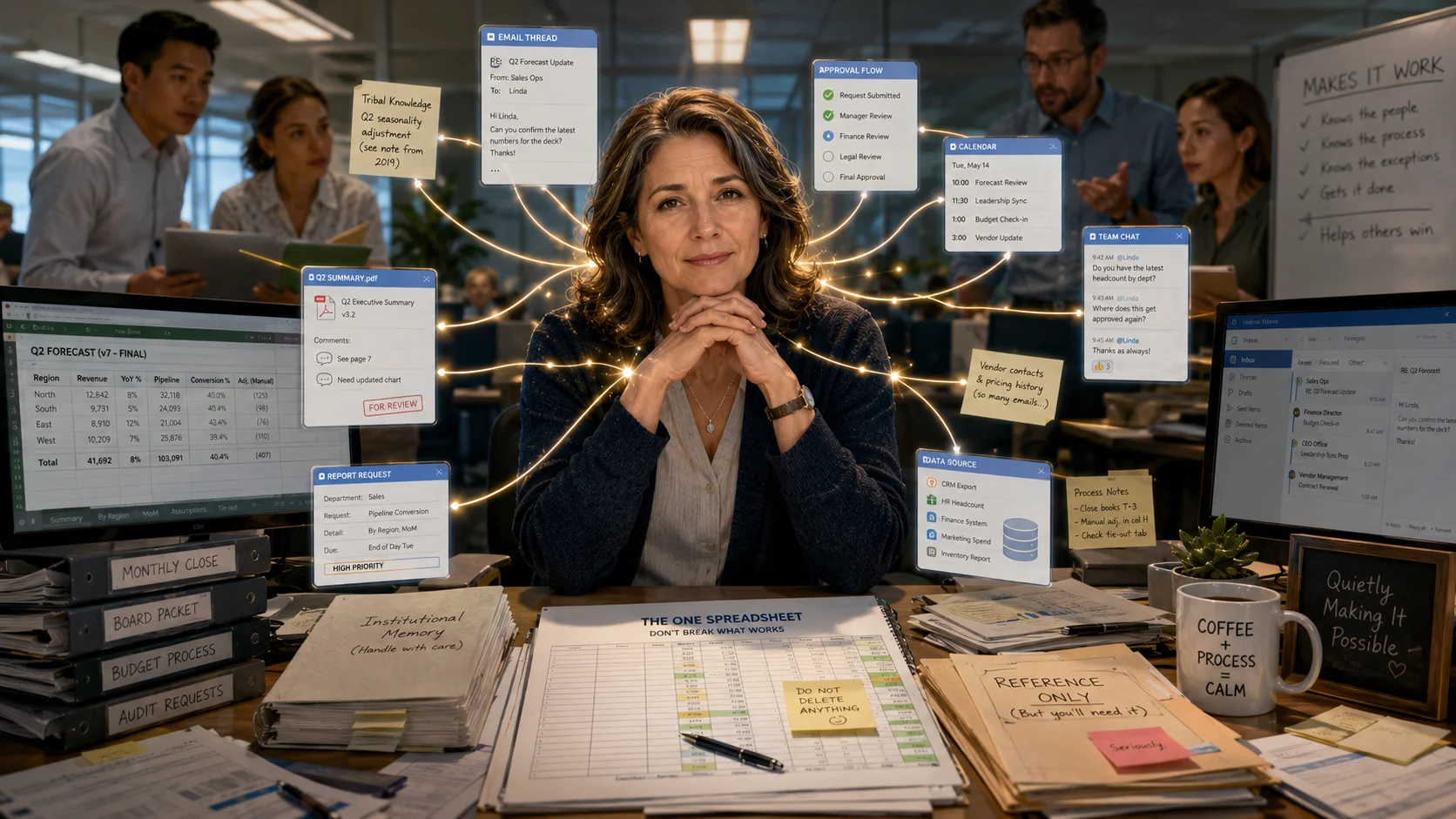

Ask where the spreadsheet became the system of record because the real system was too rigid.

Ask where someone spends Friday afternoon copying data from one place into another while quietly wondering whether this is what adulthood was supposed to be.

Ask where the business depends on one person named Linda who “just knows how it works.”

There is your agent opportunity.

Not because Linda should be replaced. God help us if we build a future where all the Lindas are discarded after holding the place together for twenty years.

The goal is compassion. The goal is to create a world where Linda is not the undocumented API for the whole company.

An agent should carry more of the repeatable burden so humans can carry the burden of judgment, care, taste, accountability, and responsibility.

That is the hopeful part.

The realistic part is this: if your idea is “chat with your data,” you are probably late. If your idea is “AI for coding,” you need a very sharp wedge. If your idea is “AI for finance,” you are walking into a field where very large players are already moving.

But if your idea is a precise workflow in a neglected industry, with a clear data object model, careful permissions, measurable evaluations, and a human approval path, you may well have struck gold.

There are still thousands of workflows too small for the labs, too weird for the big consultancies, too local for private equity, too domain-specific for a generic platform, and too valuable for the people doing them to ignore.

That is where builders inside companies should look.

Not at the glowing keynote stage.

Look at the Tuesday afternoon workflow.

Look at the thing no one brags about but everyone depends on.

Look at the process held together by email threads, tribal knowledge, stale PDFs, one heroic spreadsheet, and a Teams message that says, “Can someone check this before it goes out?”

The Next Waves Will Follow The Bottlenecks

Finance is not the end of this pattern. It is an early proof point.

The next waves of agentic AI will likely follow the bottlenecks that sit around large organizations.

Procurement and supply chain, because organizations need to source, buy, negotiate, track, receive, substitute, reconcile, and explain what happened.

Legal, compliance, and audit, because someone has to review what the business is doing, determine what is allowed, document why it was allowed, and prove later that the right controls were followed.

HR, talent, and learning, because companies are made of people, and people create workflows that are sensitive, messy, repetitive, consequential, and full of judgment.

But the lesson is not to chase each category as soon as it becomes fashionable.

The lesson is to ask where the bottleneck lives.

The money will keep moving toward work that has objects, rules, actions, approvals, exceptions, and measurable outcomes.

So should the builders.

The Better Whiteboard Question

So let’s go back to the whiteboard.

The room is still there. The soda can is empty. Someone has written AI Ideas at the top. Underneath it, someone has written:

Chatbot.

Leave it there for a moment.

Then draw a line beneath it and write a better question:

Where is Linda?

Then, under that, write the questions that matter:

What is the business data object Linda interacts with?

What systems does Linda use?

What actions does Linda take?

Who approves Linda’s work?

How do Linda, her team, and her boss know she did the right thing?

What happens when Linda is on vacation?

What happens when Linda makes a mistake?

That is when the room changes.

Because now you are no longer brainstorming AI features.

You are discussing work: the places where real people are still waiting for the work to become lighter, cleaner, safer, and more humane, so they can get back to the job of transforming organizations, serving customers, and building a better world.

Once you find Linda, build there.